What are the ImportFromWeb features?

ImportFromWeb comes with some great features for powerful and efficient web scraping in Google Sheets, including:

- Cache control, so you always stay in control of your ImportFromWeb usage

- JavaScript rendering: ImportFromWeb successfully scrapes data even from complex web pages, such as JavaScript-rendered websites

- Location-based content scraping, so you decide which country’s IP address the data is scraped from

- Mass web scraping, so you can seamlessly scrape hundreds or thousands of URLs at the same time

- Automatic updates, so your data is updated on a regular basis (even when you’re offline)

- Function monitoring to control and understand the data scraped

- Auto-refresh, so you don’t have to relaunch the =IMPORTFROMWEB() functions manually

- IP Rotation: ImportFromWeb uses proxy servers to fetch web pages from different IP addresses so that pages load correctly, every time

- Number formatting: ImportFromWeb automatically applies number formatting to data, including currency, date and time

- Templates catalog, so you can enjoy all ImportFromWeb’s ready-to-use solutions designed for Amazon, Google Maps, Google Search, YouTube, Instagram, Yahoo Finance, …

What websites can I scrape with ImportFromWeb?

With ImportFromWeb, you have the power to scrape data from any website! Whether it’s a simple static page or a dynamic site loaded with JavaScript, ImportFromWeb can handle it all.

Using XPaths or CSS selectors, you can easily extract the data you need from any website. From e-commerce platforms to news sites, blogs, and more, the possibilities are endless. Just specify the elements you want to scrape, and ImportFromWeb will do the heavy lifting for you.

However, it’s important to note that ImportFromWeb is designed to scrape data from public pages only. Please respect the website’s terms of service and ensure that you’re scraping within legal and ethical boundaries.

Can I extract data from a page that requires login?

ImportFromWeb doesn’t have the ability to log in to a website.

However, users with technical skills may be able to use the function to extract data from private pages: like ImportJSON, ImportFromWeb accepts a cURL request as its first parameter. You might be able to copy the full cURL request from Chrome DevTools and use it in your IMPORTFROMWEB function.
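As a rough illustration of the idea above, the Python sketch below builds the text of an IMPORTFROMWEB formula whose first parameter is a cURL request. The URL, the cookie value, and the `h1` selector are hypothetical placeholders, and `sheets_string` and `build_formula` are helpers invented for this example, not part of ImportFromWeb. The only Google Sheets rule it relies on is that a double quote inside a string literal is written as two double quotes.

```python
def sheets_string(value: str) -> str:
    """Wrap text as a Google Sheets string literal, doubling any inner double quotes."""
    return '"' + value.replace('"', '""') + '"'


def build_formula(first_param: str, selector: str) -> str:
    """Return the text of an IMPORTFROMWEB formula (hypothetical helper)."""
    return f"=IMPORTFROMWEB({sheets_string(first_param)}, {sheets_string(selector)})"


# Hypothetical cURL request, as it might be copied from the browser's developer tools.
curl_request = "curl 'https://www.example.com/account' -H 'Cookie: session=PLACEHOLDER'"

formula = build_formula(curl_request, "h1")
print(formula)
```

Whether a given copied request works depends on the website and on how long its session stays valid, so treat the resulting formula as a starting point to test in your own sheet.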

Beware that, by signing up to a platform, you may have accepted the platform’s terms and conditions. Check carefully that using a tool like ImportFromWeb does not breach them.

Can I scrape data from JavaScript-rendered websites?

ImportFromWeb is designed to handle JavaScript-rendered websites with ease.

With the js_rendering option, you can effortlessly scrape data from websites that rely on JavaScript to load and display content. It’s a powerful feature that enables you to access even more data-rich websites and gather the information you need. If you’re wondering how to use this feature, we’ve got you covered! Check out our comprehensive guide on how to retrieve content from JavaScript-rendered websites.

Do I need to know XPaths to use ImportFromWeb?

No! You can use CSS selectors instead.

While XPaths offer more flexibility and allow more complex queries, CSS selectors are familiar to most people with a basic knowledge of HTML/CSS.

If you are unsure how to use CSS selectors, you can read this excellent guide from CSS-Tricks.
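To give a feel for how the two notations relate, here is a minimal Python sketch that runs two XPath queries on a small, made-up page and notes the rough CSS selector equivalent of each in a comment. It uses Python's standard library, which supports only a limited XPath subset, purely for illustration. It is not how ImportFromWeb works internally, and the markup and class names are hypothetical.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed snippet standing in for a product page.
PAGE = """
<html>
  <body>
    <div class="product">
      <h2 class="title">Blue mug</h2>
      <span class="price">12.50</span>
    </div>
    <div class="product">
      <h2 class="title">Red mug</h2>
      <span class="price">14.00</span>
    </div>
  </body>
</html>
"""

root = ET.fromstring(PAGE)

# XPath: every <h2> whose class attribute is exactly "title".
# Rough CSS selector equivalent: h2.title
titles = [el.text for el in root.findall(".//h2[@class='title']")]

# XPath: every <span class="price"> that is a direct child of a <div class="product">.
# Rough CSS selector equivalent: div.product > span.price
prices = [el.text for el in root.findall(".//div[@class='product']/span[@class='price']")]

print(titles)
print(prices)
```

The equivalence is only approximate: the XPath test above requires the class attribute to be exactly `title`, whereas the CSS selector `h2.title` also matches elements that carry additional classes.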

When is data updated?

Your data is updated when the cache expires, or when you update it yourself, either manually with the RUN button or automatically through a trigger.

Check out our full guide on how to control when the data updates.

What is Cache control?

Fetching the same content constantly is not efficient. That is why ImportFromWeb caches a page’s source code once it has loaded with the expected data.

By default, the ImportFromWeb cache has a lifetime of 1 week, and you can extend it up to 4 weeks.
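The expiry rule described above can be sketched in a few lines of Python. This is only a simplified model under stated assumptions: the function name `cache_expired` and the way the limit is enforced (silently capping longer lifetimes at 4 weeks) are invented for the example, and ImportFromWeb's real cache logic may differ.

```python
from datetime import datetime, timedelta

DEFAULT_LIFETIME = timedelta(weeks=1)  # default lifetime stated above
MAX_LIFETIME = timedelta(weeks=4)      # maximum lifetime stated above


def cache_expired(fetched_at: datetime, now: datetime,
                  lifetime: timedelta = DEFAULT_LIFETIME) -> bool:
    """Return True once a cached page is at least as old as its lifetime.

    Assumption: lifetimes longer than 4 weeks are capped at 4 weeks.
    """
    effective = min(lifetime, MAX_LIFETIME)
    return now - fetched_at >= effective


fetched = datetime(2024, 1, 1)
print(cache_expired(fetched, datetime(2024, 1, 5)))                      # 4 days old, default lifetime
print(cache_expired(fetched, datetime(2024, 1, 9)))                      # 8 days old, default lifetime
print(cache_expired(fetched, datetime(2024, 1, 9), timedelta(weeks=4)))  # 8 days old, extended lifetime
```

In this model, a page fetched 8 days ago is fetched again under the default lifetime but is still served from the cache when the lifetime has been extended to 4 weeks.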

Can I scrape location-based content?

Yes, you can! With the powerful options of the IMPORTFROMWEB() function, you can scrape location-based content using the country_code option. This allows you to control the location, and our bots will fetch the webpage from an IP address of the specified country. For more details, check out our guide on how to scrape location-based content.

Does ImportFromWeb scrape from my IP address?

Rest assured, ImportFromWeb does not scrape websites from your IP address.

We prioritize your privacy and employ a sophisticated system that utilizes proxy servers. These servers fetch web pages on your behalf from various IP addresses that rotate regularly. This means that the website you’re scraping won’t have access to your personal IP address.

Can I be blocked by a website for scraping it?

When you use ImportFromWeb, your IP address remains completely anonymous.

How? ImportFromWeb cleverly employs proxy servers to fetch web pages from different IP addresses that rotate regularly. This ensures that your scraping activities stay under the radar and helps minimize the risk of getting blocked by websites.

What is the difference between ImportFromWeb and ImportXML?

ImportFromWeb is a user-friendly alternative to Google Sheets’ native IMPORTXML function, offering enhanced functionality and resources for web scraping.

- Web scraping efficiency: ImportFromWeb works seamlessly with hundreds of formulas per sheet, while IMPORTXML may become slow and buggy with multiple formulas.

- Website compatibility: ImportFromWeb allows you to scrape any website, including those rendered in JavaScript, whereas IMPORTXML doesn’t support JavaScript-rendered sites.

- Single formula for multiple URLs: ImportFromWeb enables you to use one formula to extract data from multiple URLs, while with IMPORTXML, you need a separate formula for each URL.

- CSS selectors and XPath queries: ImportFromWeb supports both CSS selectors and XPath queries, providing flexibility in data extraction. IMPORTXML only accepts XPath queries.

- Ready-made solutions: ImportFromWeb offers ready-made solutions for popular websites like Amazon, Google, and Yahoo Finance, simplifying the scraping process. IMPORTXML requires users to find the XPath queries themselves.

- Automation and monitoring: ImportFromWeb allows you to schedule updates and monitor data automatically, ensuring timely and accurate scraping results.

Overall, ImportFromWeb provides a more powerful and user-friendly experience for web scraping, making it an excellent alternative to IMPORTXML.

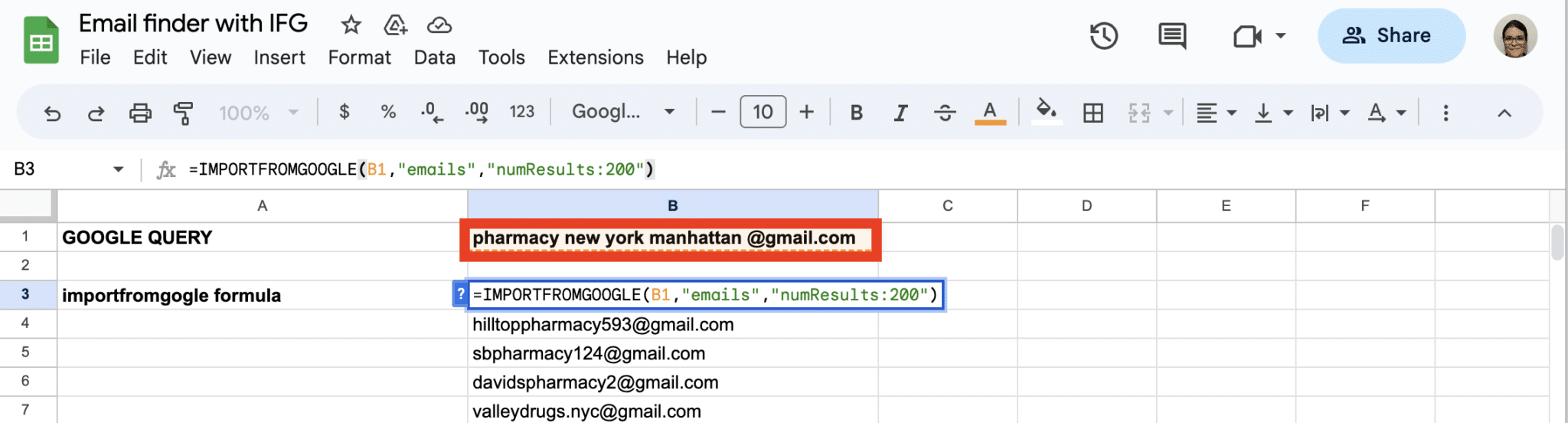

Is there a way to extract emails from websites?

Absolutely! With ImportFromWeb, you can extract emails from websites using our list of generic selectors, which includes emails.

Here’s the formula you have to write: =IMPORTFROMWEB("https://www.example.com", "emails")

Just bear in mind that while these selectors are designed to work with a wide range of websites, the success of email extraction may vary depending on whether the websites follow general guidelines for design and data presentation.

Another way of getting emails is to use the IMPORTFROMGOOGLE function to perform a Boolean search and extract the emails: =IMPORTFROMGOOGLE("search_terms","emails")
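To illustrate why results vary from one website to another, here is a small Python sketch of pattern-based email extraction. It is an assumption-laden stand-in, not ImportFromWeb's actual emails selector, whose internals are not described here. The pattern is deliberately simple, and the page content and the `extract_emails` helper are hypothetical.

```python
import re

# Deliberately simple pattern; real-world address matching is more involved.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def extract_emails(html: str) -> list[str]:
    """Return the unique email-like strings found in the page, in order of appearance."""
    seen: dict[str, None] = {}
    for match in EMAIL_PATTERN.findall(html):
        seen.setdefault(match.lower(), None)
    return list(seen)


# Hypothetical page: one plainly written address and one obfuscated address.
page = """
<p>Sales: <a href="mailto:sales@example.com">sales@example.com</a></p>
<p>Support: support [at] example [dot] com</p>
"""
print(extract_emails(page))
```

The plainly written address is found, while the obfuscated one is missed. Any generic approach tends to behave this way when a site presents its contact details in an unusual form.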

Can I scrape hundreds or thousands of pages with ImportFromWeb?

ImportFromWeb has been designed to work efficiently with hundreds of pages at a time, and it can handle up to 4,000 pages in a single spreadsheet.

Please note that Google Sheets can run only 30 functions simultaneously, so processing time increases with the number of URLs to scrape. ImportFromWeb is nonetheless well equipped to manage these demands.
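As a back-of-the-envelope illustration of that limit, the Python sketch below estimates how many sequential rounds are needed for a given number of URLs. It assumes, for simplicity, one function call per URL and that each round runs exactly 30 functions. Real scheduling in Google Sheets is not that regular, and a single ImportFromWeb formula can cover several URLs, so treat the figures as rough orders of magnitude. The helper name `rounds_needed` is invented for the example.

```python
import math

MAX_CONCURRENT_FUNCTIONS = 30  # simultaneous functions, as stated above


def rounds_needed(url_count: int, per_round: int = MAX_CONCURRENT_FUNCTIONS) -> int:
    """Number of sequential rounds if each round runs at most `per_round` functions."""
    return math.ceil(url_count / per_round)


for count in (30, 300, 4000):
    print(count, "URLs ->", rounds_needed(count), "rounds")
```

Under these assumptions, 4,000 URLs take 134 rounds, compared with a single round for 30 URLs. This is why large jobs take noticeably longer to complete.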

For tips on efficiently scraping hundreds or thousands of URLs with ImportFromWeb, we recommend checking out our resource: Our Tips for Mass Web Scraping with ImportFromWeb.

Is it possible to scrape data from multiple pages that retain the same URL?

No, it is not possible to scrape data from multiple pages that retain the same URL using ImportFromWeb. ImportFromWeb is designed to scrape content from a specific URL, and it cannot perform actions on webpages, such as navigating to the next page.

When webpages share the same URL for multiple pages of content, ImportFromWeb will only scrape data from the initial URL provided in the function.

Does ImportFromWeb work on Excel?

ImportFromWeb is currently compatible with Google Sheets only and does not work directly with Excel. However, there’s good news for Excel users who want to benefit from web data scraping.

You can easily export data from Google Sheets to Excel. Here’s how you can do it:

1. In Google Sheets, open the document containing the scraped data.

2. Go to the “File” menu.

3. Select “Download” and choose the format you’d like to export to, such as “Microsoft Excel (.xlsx).”

The data will be downloaded as an Excel file, which you can then open and use in Microsoft Excel.