With our custom-built function, ImportJSON, you can easily scrape and extract data from Twitter. There are so many insights you can get from one of the most used social media in the world.

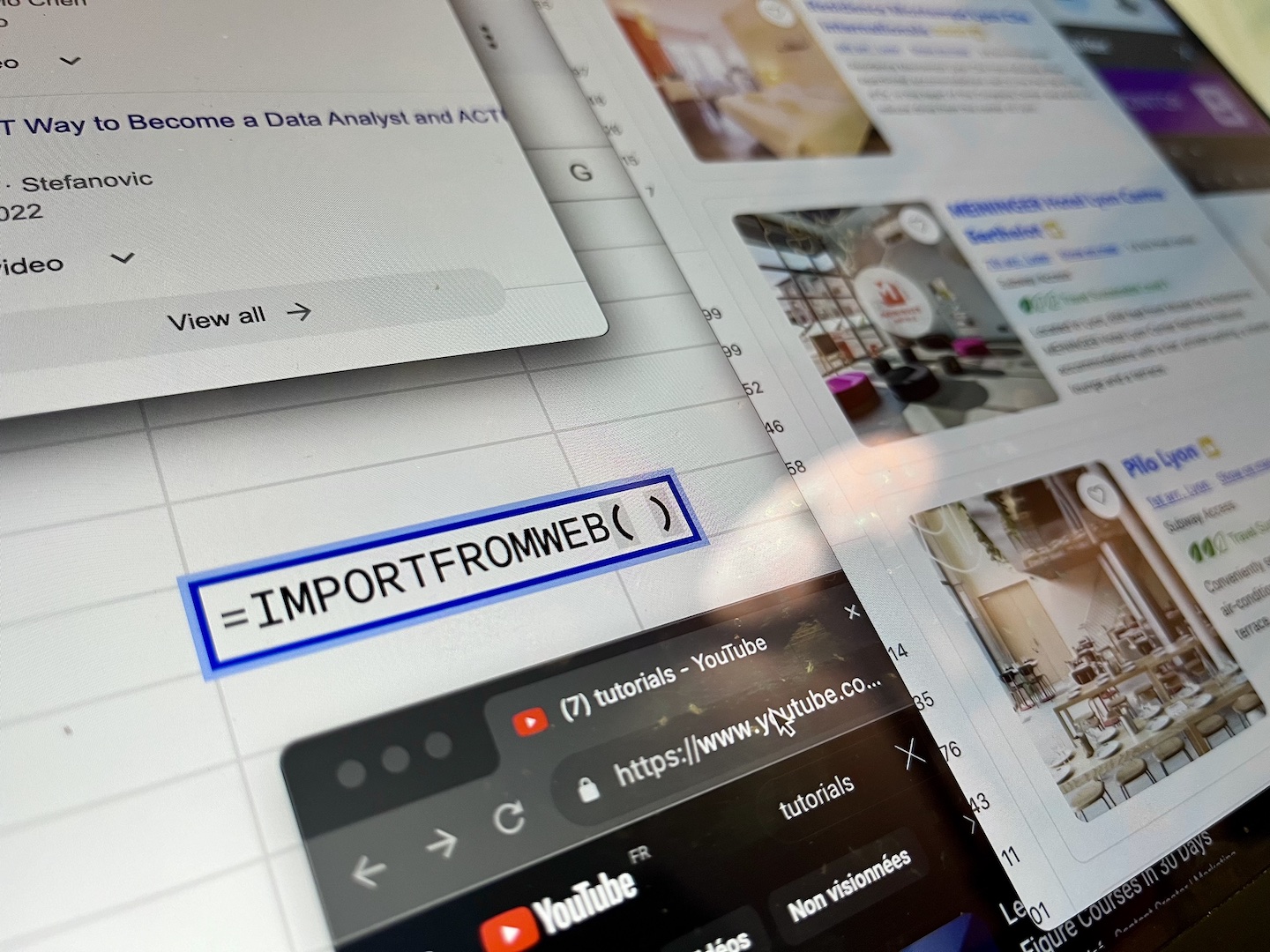

In this guide, we’ll explain how you can scrape useful data in a glimpse of an eye without having to worry to write complex code. It will be as easy as using a regular Excel function!

How does it work?

Our function – ImportJSON – is built on top of Google Sheets to extend its functionalities, can extract any element available from an API. It allows you to extract directly in Google Sheets any data you may need for your analysis.

We are going to leverage Twitter’s public API to extract the data we need for this tutorial. Don’t worry if you never worked with an API, we will guide you through the process and doing it by yourself will be like cutting through butter.

What are we going to scrape from Twitter?

One of the most common data we want to scrape from Twitter are the metrics associated with a list of profiles, like the number of followers. The only thing you need is a list of Twitter user profiles you want to retrieve data from, and our formula will take care of the rest.

Get the user ids for the profiles you want to scrape from Twitter

The first step to scrape data from a list of Twitter profiles is simply to get the list of Twitter IDs. You can find it simply looking at the URL displayed in your browser.

Alternatively, you can also have a look at the public profile and extract the Twitter handle, removing the @ located at the beginning.

Include them in a Google spreadsheet and create URLs to scrape

Once you have defined all the profiles you want to scrape, include them in a Google spreadsheet and create the Twitter URLs we’ll use to scrape data.

One question may arise at this point: why don’t we use directy the Twitter URL? Because based on how you will retrieve the list of profiles you want to extract data from, you may end up having only their Twitter handle. In this case, you need to recreate the URL yourself.

That’s why we decided to add this extra step in our tutorial, so everyone can follow.

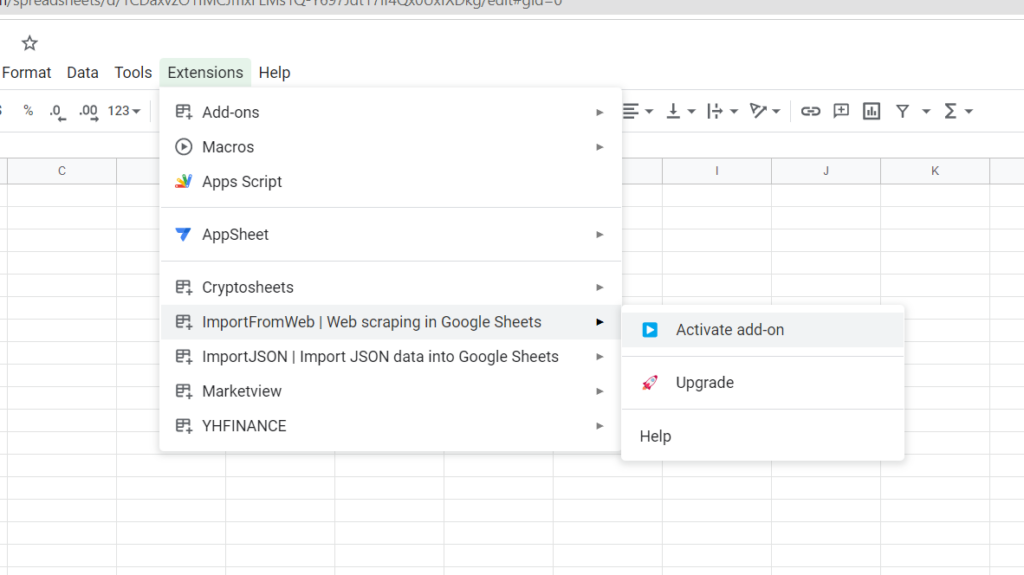

Install and activate ImportJSON

To use our custom formula, you need to install it from the Google Marketplace and then activate it under Add-ons > IMPORTJSON > Activate add-on.

This step is mandatory to be able to use the new formula.

Generate a gt parameter

To scrape easily data from your Twitter profiles, you need to generate a gt parameter. How can you do that?

- Install the EditThisCookie plugin. It is only available for Chrome but you can easily find equivalents if you use another browser.

- Go to Twitter without being logged

- Open the EditThisCookie extension menu and copy the value of the gt parameter

Create the Twitter URLs appending the gt parameter

Using the same logic explained at step 2, append the gt parameter to your URL to end up with something like: https://twitter.com/michelleobama?gt=1523973489157357568

Define the data you want to extract and include them in your Google Sheets

Using this method, you can extract a lot of data from Twitter linked to a specific account. Here are the most common, with their associated identifier:

- Date of creation: data/user/result/legacy/created_at

- Profile description: data/user/result/legacy/description

- Followers: data/user/result/legacy/followers_count

- Followings: data/user/result/legacy/friends_count

- Location: data/user/result/legacy/location

- Number of Tweets: data/user/result/legacy/statuses_count

You can scrape more data, but these are the most common ones that we wished to include in this tutorial. Add these identifiers in your Google Sheets (for example as shown below) because you’ll need to reference them in the formula we are going to use.

Scrape the data

We are now ready to scrape the data using the following formula:

=IMPORTJSON(urls_to_scrape;what_to_scrape;filters)

The filters are optional but in this case, we need them because we want to retrieve the data without any header. Just include the following information in your Google Sheets and reference these cells in the third part of the formula

In our example, if you use =IMPORTJSON($C3;D$2;$A$15:$B$15), you will extract the data highlighted in blue. If you then drag the formula, you’ll be able to scrape everything you need.

You can now scrape the data from Twitter for the profiles you want to watch closely, and even schedule execution to detect changes. How cool is that?

Did you find this tool useful?

Has it helped you? We’d love to hear all of your feedback so that we can keep providing the best information on web scraping!